I started tweaking the settings after reading the documentation and talking to other DD users.

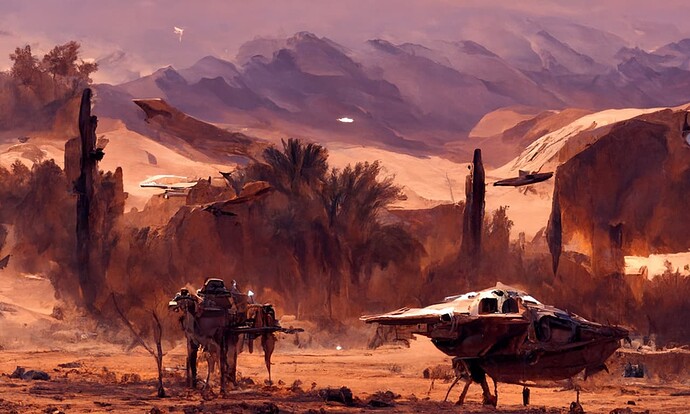

Here are some results.

I like that I managed to get finer details, but I think I lost some of the abstraction that looked nicer in the previous images. I also tweaked the prompt somewhat. For now I had to reduce the image resolution to speed up generation while I am still exploring the settings. My goal is an art style somewhere between Disco Elysium and Guild Wars 2: abstract, yet with some detail. Once that is done, I will start generating other motifs. For now, Desert Planet is the baseline.

Settings of this run

I forgot to save the seed number >.< Well, consider these to be NFTs now, lol.

    {
      "text_prompts": {
        "0": [
          "A beautiful painting of sci-fi starship, standing in the desert landscape, by greg rutkowski and thomas kinkade, Trending on artstation.",
          "brown color scheme"
        ],
        "100": [
          "Dust storm is approaching in the horizon",
          "Heavy winds"
        ]
      },
      "image_prompts": {},
      "clip_guidance_scale": 10000,
      "tv_scale": 0,
      "range_scale": 150,
      "sat_scale": 0,
      "cutn_batches": 8,
      "max_frames": 10000,
      "interp_spline": "Linear",
      "init_image": null,
      "init_scale": 1000,
      "skip_steps": 10,
      "frames_scale": 1500,
      "frames_skip_steps": "60%",
      "perlin_init": false,
      "perlin_mode": "mixed",
      "skip_augs": false,
      "randomize_class": true,
      "clip_denoised": false,
      "clamp_grad": true,
      "clamp_max": 0.05,
      "seed": 1031775764,
      "fuzzy_prompt": false,
      "rand_mag": 0.05,
      "eta": 0.8,
      "width": 768,
      "height": 512,
      "diffusion_model": "512x512_diffusion_uncond_finetune_008100",
      "use_secondary_model": true,
      "steps": 500,
      "diffusion_steps": 1000,
      "diffusion_sampling_mode": "ddim",
      "ViTB32": true,
      "ViTB16": true,
      "ViTL14": false,
      "ViTL14_336px": false,
      "RN101": false,
      "RN50": true,
      "RN50x4": false,
      "RN50x16": false,
      "RN50x64": false,
      "cut_overview": "[12]*400+[4]*600",
      "cut_innercut": "[4]*400+[12]*600",
      "cut_ic_pow": 1,
      "cut_icgray_p": "[0.2]*400+[0]*600",
      "key_frames": true,
      "angle": "0:(0)",
      "zoom": "0: (1), 10: (1.05)",
      "translation_x": "0: (0)",
      "translation_y": "0: (0)",
      "translation_z": "0: (10.0)",
      "rotation_3d_x": "0: (0)",
      "rotation_3d_y": "0: (0)",
      "rotation_3d_z": "0: (0)",
      "midas_depth_model": "dpt_large",
      "midas_weight": 0.3,
      "near_plane": 200,
      "far_plane": 10000,
      "fov": 40,
      "padding_mode": "border",
      "sampling_mode": "bicubic",
      "video_init_path": "/content/drive/MyDrive/init.mp4",
      "extract_nth_frame": 2,
      "video_init_seed_continuity": false,
      "turbo_mode": false,
      "turbo_steps": "3",
      "turbo_preroll": 10,
      "use_horizontal_symmetry": false,
      "use_vertical_symmetry": false,
      "transformation_percent": [
        0.09
      ],
      "video_init_steps": 100,
      "video_init_clip_guidance_scale": 1000,
      "video_init_tv_scale": 0.1,
      "video_init_range_scale": 150,
      "video_init_sat_scale": 300,
      "video_init_cutn_batches": 4,
      "video_init_skip_steps": 50,
      "video_init_frames_scale": 15000,
      "video_init_frames_skip_steps": "70%",
      "video_init_flow_warp": true,
      "video_init_flow_blend": 0.999,
      "video_init_check_consistency": false,
      "video_init_blend_mode": "optical flow"
    }
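
A few of these values are small expressions rather than plain numbers, so here is a rough sketch of how I read them. It assumes the schedule strings (`cut_overview`, `cut_innercut`, `cut_icgray_p`) are only ever written as `[value]*count` terms joined by `+`, and that keyframe strings (`zoom`, `translation_z`, and so on) are `frame: (value)` pairs. The helper names `expand_schedule` and `parse_keyframes` are my own and hypothetical; this is not how DD itself parses them, just a way to sanity-check a settings file.

```python
import re

# Assumption: one schedule term looks like "[12]*400" or "[0.2]*400".
TERM = re.compile(r"^\s*\[\s*(\d*\.?\d+)\s*\]\s*\*\s*(\d+)\s*$")

# Assumption: one keyframe looks like "10: (1.05)" or "0:(0)".
KEYFRAME = re.compile(r"(\d+)\s*:\s*\(\s*([-+]?\d*\.?\d+)\s*\)")


def expand_schedule(expr):
    """Expand a string such as "[12]*400+[4]*600" into a flat list (hypothetical helper)."""
    values = []
    for term in expr.split("+"):
        match = TERM.match(term)
        if match is None:
            raise ValueError(f"unsupported schedule term: {term!r}")
        raw, count = match.group(1), int(match.group(2))
        # Keep integers as int and decimals as float.
        value = float(raw) if "." in raw else int(raw)
        values.extend([value] * count)
    return values


def parse_keyframes(spec):
    """Turn "0: (1), 10: (1.05)" into {0: 1.0, 10: 1.05} (hypothetical helper)."""
    return {int(frame): float(value) for frame, value in KEYFRAME.findall(spec)}


overview = expand_schedule("[12]*400+[4]*600")
innercut = expand_schedule("[4]*400+[12]*600")

print(len(overview))                 # 1000 entries in the schedule
print(overview[399], overview[400])  # 12 4: overview cuts drop after entry 400
print(innercut[399], innercut[400])  # 4 12: inner cuts rise at the same point
print(parse_keyframes("0: (1), 10: (1.05)"))
```

Read this way, the two cut schedules mirror each other: the first 400 entries ask for 12 overview cuts and 4 inner cuts, the remaining 600 ask for 4 and 12, so the total stays at 16 throughout. The `zoom` string simply pins a value of 1 at frame 0 and 1.05 at frame 10.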